June 30, 2020

The Magic behind Speech Recognition: Are Mobile Devices Getting Better at Recording Our Voice?

When we think of speech recognition, we think of speaking to Siri, Alexa, Cortana, and Google on our mobile devices. From checking the weather to catching up on the latest news, typing our searches is the past, and voice interfacing (that is, speaking what you are looking for rather than typing it) is the now. We are enchanted by how little we need to do to get an answer or to write something down without actually typing anything. However, we don’t necessarily stop to ask: What makes talking to my phone actually work? What’s the magic?

In the last decade, speech recognition has improved dramatically. Accuracy is on a steady upward path, and more products are voice enabled. However, speech recognition is not a solo performer but rather an orchestra of instrumentalists creating a coordinated sound. For that sound to rise above the cacophony, it must be discernible. Speaking to your car or your mobile app works because, like the players in an orchestra, microphones are vital to creating the music. There is a harmonious interplay between voice, hardware, and multifaceted machine learning and mathematical models, resulting in a highly accurate transcription of your voice.

Speech recognition research tackles the particulars of the models (such as design, learning rates, and more) that make sense of the audio, as well as how the audio is captured. The audio input is just as critical as the models. Microphones have improved right alongside model design, computational processing power, and machine learning. Today’s mobile devices are robustly designed to enrich our lives with cameras, video, internet, and voice. Devices do not have just one microphone but several. This arrangement, known as a microphone array, is part of the reason why talking to your phone is getting better. Like the sections of an orchestra, the microphones work together to capture voice clearly and from a distance. Together, the mathematical models and the microphones figure out what is noise and filter it out.
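For readers who want a peek behind the curtain, here is a minimal sketch of one classic way an array of microphones can work together, known as delay-and-sum beamforming. It is an illustration only, not a description of how any particular phone or Athreon app works. The microphone spacing, the 30-degree speaker direction, the simple tone standing in for a voice, and the helper names (`steering_delays`, `simulate`, `delay_and_sum`) are all assumptions made for the example. The idea: the voice reaches each microphone at a slightly different time, so if we undo those delays and average the channels, the voice lines up while the random noise partly cancels.

```python
import math
import random

SPEED_OF_SOUND = 343.0  # meters per second, approximate value for room-temperature air


def steering_delays(mic_positions_m, angle_deg, sample_rate_hz):
    """Arrival delay at each microphone, in whole samples (far-field assumption).

    mic_positions_m: microphone positions along a straight line, in meters.
    angle_deg: speaker direction measured from straight ahead (0 degrees).
    """
    sin_a = math.sin(math.radians(angle_deg))
    raw = [p * sin_a / SPEED_OF_SOUND * sample_rate_hz for p in mic_positions_m]
    earliest = min(raw)
    return [round(d - earliest) for d in raw]


def simulate(voice, delays, noise_level, rng):
    """Each microphone hears the voice late by its delay, plus its own random noise."""
    channels = []
    for d in delays:
        delayed = [0.0] * d + voice
        channels.append([s + rng.gauss(0.0, noise_level) for s in delayed])
    return channels


def delay_and_sum(channels, delays):
    """Undo each channel's delay, then average the aligned channels."""
    usable = min(len(ch) - d for ch, d in zip(channels, delays))
    return [
        sum(ch[n + d] for ch, d in zip(channels, delays)) / len(channels)
        for n in range(usable)
    ]


def mean_squared_error(a, b):
    """Average squared difference between two signals of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Hypothetical setup: four microphones 5 cm apart, speaker 30 degrees off-center.
rng = random.Random(0)
sample_rate = 16000
mics = [0.0, 0.05, 0.10, 0.15]
voice = [math.sin(2 * math.pi * 220 * n / sample_rate) for n in range(1600)]

delays = steering_delays(mics, 30.0, sample_rate)
channels = simulate(voice, delays, 0.5, rng)

single = channels[0][delays[0]:]          # what one microphone alone records
combined = delay_and_sum(channels, delays)  # what the array records together

single_error = mean_squared_error(single, voice)
array_error = mean_squared_error(combined, voice)
print("single microphone error:", single_error)
print("four-microphone array error:", array_error)
```

In this toy setup the combined signal ends up noticeably closer to the original tone than any single microphone's recording. Real devices face echoes, moving speakers, and noise that is not random, so they rely on far more sophisticated processing than this sketch.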

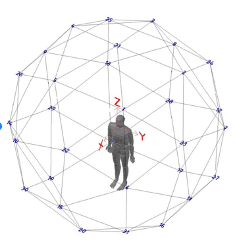

A microphone array consists of several microphones working together. How the microphones are arranged relative to one another is the array’s geometry. Depending on where in space the sound is coming from, the microphones need to be arranged in a specific way to hear or record it. An example of a complex microphone array geometry is below. To capture all the nuances of the sound, the array was set up for a study of Mozarabic chant, the liturgical chant of the Hispanic rite, a tradition dating back to the Middle Ages.

Microphone Array Geometry

For an everyday example of a microphone array, today’s sophisticated smart mobile devices, like Apple’s iPhone 11, have several microphones designed to capture your voice from a distance.

Today’s mobile device microphone arrays improve voice dictation.

Research and development continue to improve microphone geometry, microphone hardware, and the algorithms that process audio input, making them more sophisticated and more accurate. Voice-enabled devices are on a path forward. With all that in mind, Athreon’s mobile apps are designed around current and future mobile device capabilities to capture your voice and transcribe it swiftly. Depending on your industry, Athreon’s highly configurable security protocols and workflows make the work seamless. You get your life back while getting more work done. The magic is in the collaboration between Athreon, mobile devices, AI, and you.

If you would like a demonstration of what our mobile apps can do, contact us. If you have questions about whether your current mobile device will meet your needs, including for telehealth and telemedicine work, contact us for a free consultation.